GPT-5.3-Codex: practical gains for day-to-day full-stack workflows

The release of GPT-5.3-Codex puts coding-focused AI back in the spotlight. Beyond the hype, the key question is where it adds real value to a stack that already ships production work.

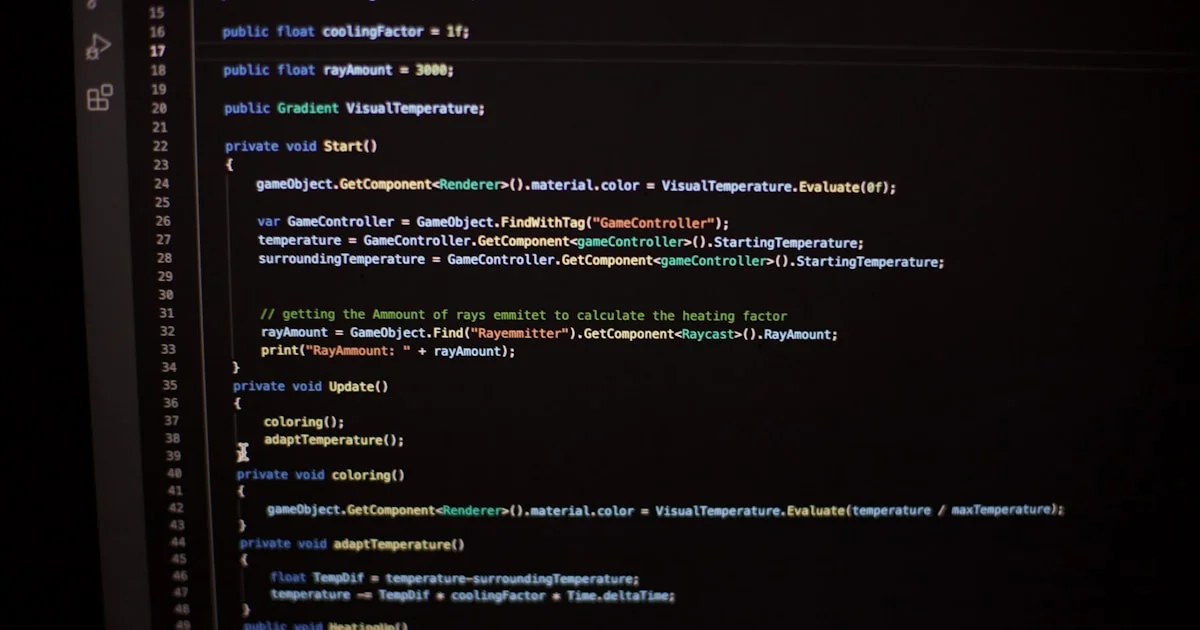

In my workflow with Laravel, PHP, JavaScript, and Astro, the model helps most when it accelerates repetitive tasks without removing technical control.

Where it usually helps

These are the tasks with the best return:

- small and consistent refactors;

- first-draft unit tests;

- data transformation utilities;

- initial technical documentation for pull requests.
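To make the "data transformation utilities" and "first-draft unit tests" items concrete, here is the kind of small, pure function that is worth delegating. This is a minimal sketch that assumes a hypothetical `Post` shape and a hypothetical `groupPostsByTag` helper for a blog index page; neither comes from a real project. Code like this is easy to review because it has no side effects and its edge cases are easy to list.

```typescript
// Hypothetical post shape for illustration; a real blog schema will differ.
interface Post {
  slug: string;
  tags: string[];
  publishedAt: Date;
}

// Groups posts by normalized tag; within each tag, the newest post comes first.
function groupPostsByTag(posts: Post[]): Record<string, Post[]> {
  // Null prototype so a tag like "constructor" cannot collide with inherited keys.
  const groups: Record<string, Post[]> = Object.create(null);
  for (const post of posts) {
    const seen: string[] = [];
    for (const raw of post.tags) {
      // Normalize so "Laravel" and " laravel " share one bucket.
      const tag = raw.trim().toLowerCase();
      // Skip empty tags and repeats of the same tag within one post.
      if (tag === "" || seen.indexOf(tag) !== -1) continue;
      seen.push(tag);
      if (!groups[tag]) groups[tag] = [];
      groups[tag].push(post);
    }
  }
  for (const tag of Object.keys(groups)) {
    groups[tag].sort((a, b) => b.publishedAt.getTime() - a.publishedAt.getTime());
  }
  return groups;
}

// Usage with made-up posts: "b" repeats a tag in different casing.
const posts: Post[] = [
  { slug: "a", tags: ["Laravel", "php"], publishedAt: new Date("2024-01-01") },
  { slug: "b", tags: [" laravel ", "Laravel"], publishedAt: new Date("2024-03-01") },
];
console.log(groupPostsByTag(posts)["laravel"].map((p) => p.slug)); // ["b", "a"]
```

A first-draft test from the model will usually cover the happy path. The review step is where you confirm the less obvious cases, such as duplicate or differently cased tags and tag names that match built-in object keys.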

The rule is straightforward: let AI propose, let engineering decide.

Where you should not delegate

- architecture decisions;

- security-sensitive changes without manual review;

- critical business logic;

- high-risk migrations involving production data.

The model can improve speed, but it does not replace accountability.
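Part of this list can be enforced mechanically. The sketch below flags changed files that should never be merged on AI output alone. The path patterns are assumptions based on default Laravel directory conventions (`database/migrations/`, `app/Http/Middleware/`, `app/Policies/`, `config/auth.php`), and `findSensitiveChanges` is a hypothetical helper, not part of any CI product. Critical business logic rarely lives in a predictable path, so it still needs human judgment.

```typescript
// Illustrative rules based on default Laravel paths; which paths count as
// "never merge on AI output alone" is a team decision, not a standard.
const SENSITIVE_RULES: { reason: string; pattern: RegExp }[] = [
  { reason: "database migration", pattern: /^database\/migrations\// },
  { reason: "auth configuration", pattern: /^config\/auth\.php$/ },
  { reason: "HTTP middleware", pattern: /^app\/Http\/Middleware\// },
  { reason: "authorization policy", pattern: /^app\/Policies\// },
];

interface Finding {
  path: string;
  reason: string;
}

// Hypothetical helper: returns one finding per changed file that matches a rule.
function findSensitiveChanges(changedFiles: string[]): Finding[] {
  const findings: Finding[] = [];
  for (const path of changedFiles) {
    for (const rule of SENSITIVE_RULES) {
      if (rule.pattern.test(path)) {
        findings.push({ path, reason: rule.reason });
        break; // one matching rule is enough to require manual review
      }
    }
  }
  return findings;
}

// Usage: only the migration is flagged.
const flagged = findSensitiveChanges([
  "app/Services/ReportFormatter.php",
  "database/migrations/2024_05_01_000000_add_status_to_orders_table.php",
]);
console.log(flagged.map((f) => `${f.reason}: ${f.path}`));
```

In CI, the list of changed files could come from `git diff --name-only` against the target branch, and a non-empty result would require a named human reviewer before merge.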

Team integration that actually works

To use GPT-5.3-Codex reliably:

- define which tasks are AI-eligible and which are not;

- standardize prompts by task type;

- maintain a review checklist for generated code;

- measure delivery speed and defect impact.
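The first three points can live in one shared, versioned file. This is a minimal sketch that assumes a hypothetical task taxonomy mirroring the lists in this post; the `POLICIES` map, the prompt wording, and `buildPrompt` are illustrative and are not part of any Codex or OpenAI API.

```typescript
// Hypothetical task taxonomy mirroring this post's "helps" and "do not delegate" lists.
type TaskType = "refactor" | "test-draft" | "data-transform" | "pr-docs" | "architecture" | "prod-migration";

interface TaskPolicy {
  aiEligible: boolean;
  // Standard prompt with {placeholders}; absent for tasks that are not AI-eligible.
  promptTemplate?: string;
  // What the reviewer confirms before merging generated output.
  reviewChecklist: string[];
}

const POLICIES: Record<TaskType, TaskPolicy> = {
  refactor: {
    aiEligible: true,
    promptTemplate: "Refactor {file} to {goal}. Keep public behavior unchanged and list every renamed symbol.",
    reviewChecklist: ["Existing tests pass unchanged", "No edits outside the stated goal"],
  },
  "test-draft": {
    aiEligible: true,
    promptTemplate: "Write unit tests for {target}. Cover edge cases and name each test after the behavior it checks.",
    reviewChecklist: ["Tests fail when the code under test is broken", "No assertions on implementation details"],
  },
  "data-transform": {
    aiEligible: true,
    promptTemplate: "Write a pure function that converts {input} into {output}. No I/O, no global state.",
    reviewChecklist: ["Empty and malformed input handled", "Function is pure"],
  },
  "pr-docs": {
    aiEligible: true,
    promptTemplate: "Summarize this diff for reviewers: what changed, why, and how to test it.\n{diff}",
    reviewChecklist: ["Summary matches the actual diff"],
  },
  // Not AI-eligible: no template, so there is no generated code to review.
  architecture: { aiEligible: false, reviewChecklist: [] },
  "prod-migration": { aiEligible: false, reviewChecklist: [] },
};

// Fills {placeholders}; refuses ineligible task types and missing values.
function buildPrompt(task: TaskType, values: Record<string, string>): string {
  const policy = POLICIES[task];
  if (!policy.aiEligible || policy.promptTemplate === undefined) {
    throw new Error(`Task type "${task}" is not AI-eligible`);
  }
  return policy.promptTemplate.replace(/\{(\w+)\}/g, (_match: string, key: string) => {
    if (!Object.prototype.hasOwnProperty.call(values, key)) {
      throw new Error(`Missing value for {${key}}`);
    }
    return values[key];
  });
}

// Usage: a standardized refactor prompt.
console.log(buildPrompt("refactor", {
  file: "app/Services/InvoiceService.php",
  goal: "extract the tax calculation into a private method",
}));
```

Keeping ineligible task types in the same map, with no template, makes the "not AI-eligible" decision explicit instead of leaving it to each developer.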

Without constraints like these, the time saved during generation tends to be lost again in longer reviews.
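One way to check whether review time is eating the gains is to compare open-to-merge time and linked defects for AI-assisted pull requests against the rest. This is a minimal sketch that assumes a hypothetical `PullRequestRecord` your team would populate from its Git host and issue tracker; the field names and the choice of median cycle time and defects per PR are assumptions, not a standard metric set.

```typescript
// Hypothetical record shape; populate it from your Git host and issue tracker.
interface PullRequestRecord {
  aiAssisted: boolean;
  openedAt: Date;
  mergedAt: Date;
  // Defects later traced back to this PR, e.g. via linked bug tickets.
  linkedDefects: number;
}

interface CohortStats {
  count: number;
  medianCycleHours: number; // open-to-merge, so review time is included
  defectsPerPr: number;
}

const HOUR_MS = 60 * 60 * 1000;

function median(values: number[]): number {
  if (values.length === 0) return NaN;
  const sorted = values.slice().sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 === 1 ? sorted[mid] : (sorted[mid - 1] + sorted[mid]) / 2;
}

function summarize(prs: PullRequestRecord[]): CohortStats {
  const cycleHours = prs.map((pr) => (pr.mergedAt.getTime() - pr.openedAt.getTime()) / HOUR_MS);
  const defects = prs.reduce((sum, pr) => sum + pr.linkedDefects, 0);
  return {
    count: prs.length,
    medianCycleHours: median(cycleHours),
    defectsPerPr: prs.length === 0 ? NaN : defects / prs.length,
  };
}

// Compares AI-assisted PRs against the rest on both speed and defects.
function compareCohorts(prs: PullRequestRecord[]): { assisted: CohortStats; other: CohortStats } {
  return {
    assisted: summarize(prs.filter((pr) => pr.aiAssisted)),
    other: summarize(prs.filter((pr) => !pr.aiAssisted)),
  };
}
```

Because open-to-merge time includes review, slower reviews show up directly in the numbers. The two cohorts usually differ in task type, so treat the comparison as a trend to watch rather than proof of cause.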

How it fits my blog and portfolio workflow

For technical content sites, this type of model helps with:

- structuring first outlines for articles;

- converting rough notes into draft posts;

- generating starter code examples for common topics;

- translating technical drafts while preserving a professional tone.

The value is not auto-publishing AI text. It is reducing editorial friction so more time goes to judgment and quality.
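The "no auto-publishing" rule can also be encoded in the site build. This is a minimal sketch that assumes hypothetical frontmatter fields `aiAssisted` and `reviewedBy`; `draft` is a common convention rather than something Astro enforces, and `isPublishable` is an illustrative helper.

```typescript
// Hypothetical frontmatter: `aiAssisted` and `reviewedBy` are not Astro built-ins,
// and `draft` is only a convention unless your schema enforces it.
interface PostFrontmatter {
  title: string;
  draft?: boolean;
  aiAssisted?: boolean;
  reviewedBy?: string;
}

// Publishable = not a draft and, if AI-assisted, signed off by a named human reviewer.
function isPublishable(data: PostFrontmatter): boolean {
  if (data.draft === true) return false;
  if (data.aiAssisted === true) {
    return typeof data.reviewedBy === "string" && data.reviewedBy.trim() !== "";
  }
  return true;
}

console.log(isPublishable({ title: "Notes on queues", aiAssisted: true })); // false
console.log(isPublishable({ title: "Notes on queues", aiAssisted: true, reviewedBy: "author" })); // true
```

In an Astro project, a predicate like this could be passed as the filter argument of `getCollection`, for example `getCollection("blog", ({ data }) => isPublishable(data))`, assuming the collection schema declares these fields.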

Conclusion

GPT-5.3-Codex can be a strong multiplier for full-stack work if you define a clear operating model. Productivity comes from disciplined use, not blind automation.